Building Privacy-Preserving Deep Learning Systems using Differential Privacy and Federated Learning

Event: Senior Division

Category: Software and Embedded Systems

Student: Ananya Gangavarapu

Table: COMP1901

Experimentation location: Home

Regulated Research (Form 1c): No

Project continuation (Form 7): No

Abstract:

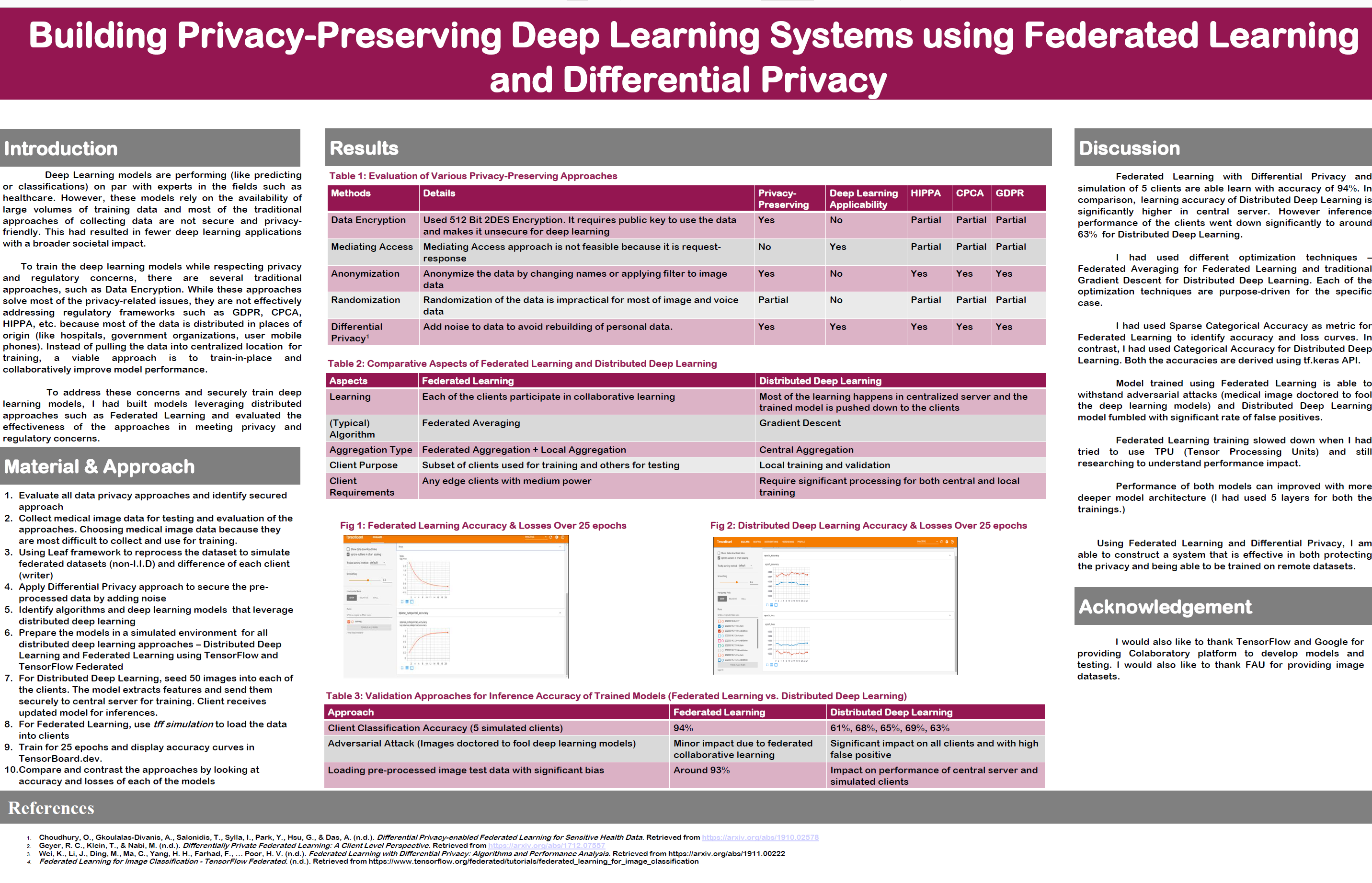

Deep Learning and Artificial Intelligence are only as effective as the data used to train their models. Given growing concern about privacy violations and increasing regulation of data use, a lack of relevant data is impeding the growth of deep learning systems. The problem is especially acute in healthcare, where most data is locked away in separate hospitals, insurance companies, and patient health records. Federated Learning provides a secure way to train deep learning models without pulling the data into one place for training. Federated Learning with Differential Privacy provides a privacy-preserving approach to training that can achieve prediction accuracy on par with traditional approaches. I achieved around 87% accuracy with deep learning models trained using federated learning on fundus image datasets distributed across edge devices (mobile phones).

Bibliography/Citations:

No additional citations

Additional Project Information

Project website: -- No project website --

Presentation files: -- No files provided --

Research paper:

Additional Resources: -- No resources provided --

Project files: -- No files provided --

Research Plan:

How can deep learning systems be trained and built without compromising privacy or violating regulations?

Deep Learning systems are only as good as the data used to train their underlying models, and data, especially in healthcare, is very difficult to acquire because of privacy and regulatory concerns. In addition, data is locked away in separate hospitals, insurance companies, and research laboratories.

Goals / Expected Outcomes

Build an effective and secure approach for accurately training deep learning models that addresses privacy concerns and leverages distributed datasets.

Hypothesis

Federated Learning with Differential Privacy provides a secure approach to training models on distributed datasets.
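The hypothesis rests on two mechanisms working together: federated averaging, in which a server combines model updates computed on each device instead of collecting raw images, and differential privacy, which limits how much any single device can influence the shared model. The sketch below illustrates one way they combine, modeled on the clip-and-noise aggregation step of DP-FedAvg. It is a minimal sketch under stated assumptions, not the project's TFF implementation: model updates are plain Python lists of floats, and the function names and the `clip_norm` and `noise_multiplier` values are hypothetical. It also does not compute the privacy budget (ε) that results from repeated rounds, which requires a separate privacy accountant.

```python
# Minimal sketch (assumption): model updates are flat lists of floats,
# standing in for the weight tensors a real TFF model would exchange.
import math
import random
from typing import List, Sequence

Vector = List[float]


def l2_norm(vec: Sequence[float]) -> float:
    """Euclidean (L2) norm of a vector."""
    return math.sqrt(sum(x * x for x in vec))


def clip_update(update: Sequence[float], clip_norm: float) -> Vector:
    """Scale an update down so its L2 norm is at most clip_norm."""
    norm = l2_norm(update)
    if norm <= clip_norm:
        return list(update)
    scale = clip_norm / norm
    return [x * scale for x in update]


def dp_federated_average(
    client_updates: Sequence[Sequence[float]],
    clip_norm: float,
    noise_multiplier: float,
    rng: random.Random,
) -> Vector:
    """Clip each client's update, sum them, add Gaussian noise, then average.

    After clipping, adding or removing one client changes the sum by at most
    clip_norm (in L2 norm), so the noise standard deviation is set to
    noise_multiplier * clip_norm.
    """
    if not client_updates:
        raise ValueError("need at least one client update")
    total = [0.0] * len(client_updates[0])
    for update in client_updates:
        for i, x in enumerate(clip_update(update, clip_norm)):
            total[i] += x
    sigma = noise_multiplier * clip_norm
    noisy_total = [t + rng.gauss(0.0, sigma) for t in total]
    return [t / len(client_updates) for t in noisy_total]


if __name__ == "__main__":
    # Hypothetical updates from three devices for a 3-parameter model.
    updates = [[0.5, -0.2, 0.1], [3.0, 4.0, 0.0], [0.1, 0.1, 0.1]]
    global_weights = [0.0, 0.0, 0.0]
    delta = dp_federated_average(
        updates, clip_norm=1.0, noise_multiplier=1.1, rng=random.Random(0)
    )
    # The server applies the noisy average update to the shared model.
    global_weights = [w + d for w, d in zip(global_weights, delta)]
    print(global_weights)
```

Clipping is what makes the added noise meaningful: without a bound on each update's norm, a single device could shift the average arbitrarily far, and no fixed amount of noise would hide its contribution.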

Procedure

- Evaluate the data security practices currently available and check whether any of the traditional approaches are effective for training. The key security practices are:

  - Data Encryption

  - Data Anonymization

  - Data Randomization

  - Data Federation

  - Differential Privacy

- Identify the privacy and health regulations that govern the use of healthcare data:

  - HIPAA

  - GDPR

  - California Consumer Privacy Act (CCPA)

- Map the requirements of deep learning against these data security approaches and regulatory frameworks

- Assess and score the effectiveness of each of the approaches for deep learning

- Set up a distributed environment for validating distributed deep learning and Federated Learning approaches

- Collect medical fundus images and seed them across the distributed environment, making sure that no edge device (mobile phone, or Raspberry Pi with Coral) holds more than 50 images

- Develop the deep learning model using TensorFlow Federated (TFF) and deploy it in Google Colaboratory

- Train the TFF model with differential privacy and validate its accuracy

- Deploy a distributed deep learning model built with a convolutional neural network (CNN) on a central server, and distribute the images across edge devices, again with no more than 50 images per device. Add noise to the images on each device, send them to the centralized model for training, and validate the accuracy of this distributed deep learning model

- Compare the accuracy of the Federated Learning model with that of the distributed deep learning model
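Two steps of the procedure above are mechanical enough to sketch: seeding the fundus images so that no device holds more than 50, and the comparison baseline in which noise is added to images before they are sent to the central model. The sketch below is plain Python under stated assumptions: images are identified by string IDs and represented as flat lists of pixel intensities scaled to [0, 1], the function names are hypothetical, and the noise level `sigma` is illustrative rather than calibrated to a formal privacy budget.

```python
# Minimal sketch (assumption): images are string IDs for partitioning and
# flat lists of pixel intensities in [0, 1] for noising.
import math
import random
from typing import List, Sequence

MAX_IMAGES_PER_DEVICE = 50  # per-device cap from the procedure


def partition_across_devices(
    image_ids: Sequence[str], num_devices: int, rng: random.Random
) -> List[List[str]]:
    """Shuffle image IDs and deal them round-robin so no device exceeds the cap."""
    if num_devices <= 0:
        raise ValueError("num_devices must be positive")
    if len(image_ids) > num_devices * MAX_IMAGES_PER_DEVICE:
        needed = math.ceil(len(image_ids) / MAX_IMAGES_PER_DEVICE)
        raise ValueError(f"{len(image_ids)} images need at least {needed} devices")
    ids = list(image_ids)
    rng.shuffle(ids)
    devices: List[List[str]] = [[] for _ in range(num_devices)]
    # Round-robin dealing gives each device at most ceil(n / num_devices) images,
    # which is <= MAX_IMAGES_PER_DEVICE given the check above.
    for i, image_id in enumerate(ids):
        devices[i % num_devices].append(image_id)
    return devices


def add_pixel_noise(
    pixels: Sequence[float], sigma: float, rng: random.Random
) -> List[float]:
    """Add Gaussian noise to each pixel intensity and clip back into [0, 1]."""
    return [min(1.0, max(0.0, p + rng.gauss(0.0, sigma))) for p in pixels]


if __name__ == "__main__":
    rng = random.Random(42)
    # Hypothetical IDs for 120 fundus images spread over 3 devices.
    devices = partition_across_devices([f"fundus_{i:03d}" for i in range(120)], 3, rng)
    print([len(d) for d in devices])  # -> [40, 40, 40]
    noisy = add_pixel_noise([0.0, 0.5, 1.0], sigma=0.1, rng=rng)
    print(noisy)
```

A balanced split is the simplest choice; an uneven (non-IID) split would more closely mimic data locked away in different hospitals.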

References

- https://ai.googleblog.com/2017/04/federated-learning-collaborative.html

- https://privacytools.seas.harvard.edu/files/privacytools/files/pedagogical-document-dp_new.pdf

- https://medium.com/syncedreview/federated-learning-the-future-of-distributed-machine-learning-eec95242d897

- https://towardsdatascience.com/the-new-dawn-of-ai-federated-learning-8ccd9ed7fc3a

- https://blogs.nvidia.com/blog/2019/10/13/what-is-federated-learning/

- https://www.tensorflow.org/federated/tutorials/federated_learning_for_image_classification

- https://www.tensorflow.org/federated/tutorials/simulations

- https://arxiv.org/pdf/1902.01046.pdf